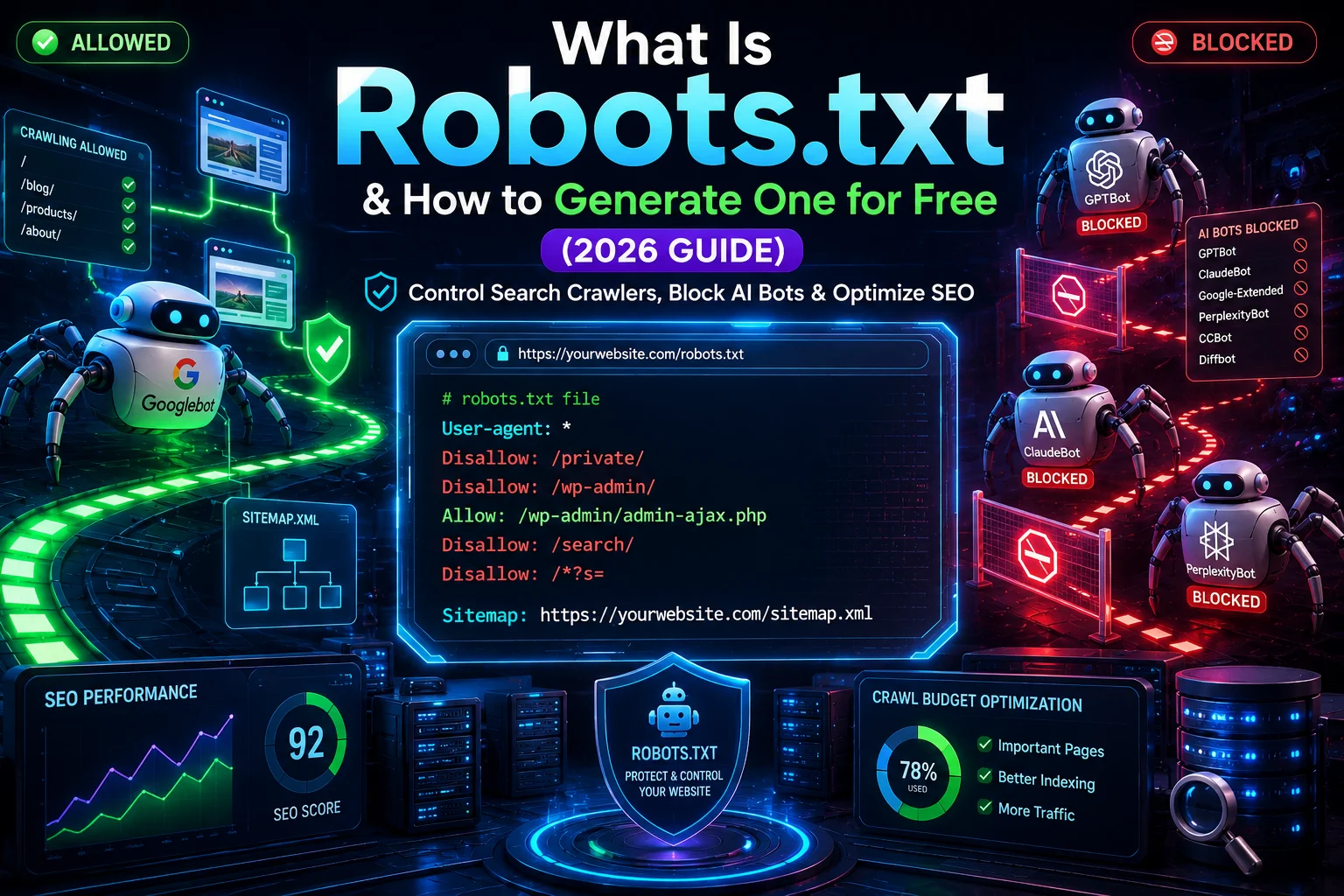

Robots.txt is a plain text file placed at the root of your website that tells search engine crawlers which pages they are allowed to access — and which ones to skip. It is one of the most fundamental technical SEO files on any website, yet it is also one of the most misunderstood. Get it wrong and you could accidentally block your entire site from Google. Get it right and you gain precise control over how crawlers navigate your content, protect sensitive pages, and optimize how search engines spend their time on your site.

In this guide, you will learn exactly what a robots.txt file is, how it works, what each directive means, and how to generate one for free in minutes — no coding skills required, no signup needed. You will also find 2026-specific guidance on blocking AI training bots like GPTBot and ClaudeBot, platform-by-platform setup instructions for WordPress, Shopify, and Wix, and a full list of common mistakes that silently kill your SEO without you realizing it.

Want to skip straight to creating yours? Use the free robots.txt generator on AllFileTools — no account required, no download, just generate and copy.

What Is a Robots.txt File?

A robots.txt file is a plain text file located at the root directory of a website (for example, yourwebsite.com/robots.txt) that communicates crawling instructions to search engine bots and other automated web crawlers. When a crawler like Googlebot visits your website, the very first thing it does before accessing any other page is check your robots.txt file — reading the instructions and deciding which areas of your site it is permitted to explore.

Think of it like a security guard at the entrance of an office building. The guard does not physically stop anyone, but they tell visitors which floors they can access and which ones are off-limits. Most visitors respect those instructions. Most crawlers respect robots.txt the same way.

What does robots.txt do?

Robots.txt does three things:

- Controls crawler access — it tells search engines which pages, directories, or file types they should crawl or skip

- Helps manage crawl budget — by blocking unimportant pages, you ensure crawlers spend their time on the pages that actually matter

- Points crawlers to your sitemap — most robots.txt files include the location of your XML sitemap, helping search engines discover content faster

It does NOT make pages private, secure, or invisible. Any page blocked in robots.txt can still appear in search results if another site links to it. If you need a page to be truly excluded from Google's index, you need the noindex meta tag.

Where is the robots.txt file located?

Robots.txt must always be placed at the root of your domain. That means:

https://yourwebsite.com/robots.txt— correcthttps://yourwebsite.com/blog/robots.txt— this will not workhttps://subdomain.yourwebsite.com/robots.txt— this only covers the subdomain, not the main domain

Every domain and subdomain needs its own robots.txt file if you want to control crawling separately.

What are robot txt files used for?

Common use cases include:

- Blocking admin areas (

/wp-admin/) from being crawled - Preventing duplicate content pages from being indexed

- Stopping crawlers from accessing staging or test environments

- Blocking AI training bots from scraping your content

- Reducing load on your server from excessive bot traffic

- Pointing crawlers to your XML sitemap

Why Robots.txt Matters for SEO

Robots.txt is not just a technical formality — it directly affects how search engines crawl and understand your website. Understanding this connection is key to using it effectively rather than accidentally working against yourself.

Is robots.txt necessary for SEO?

Robots.txt is not strictly required for a website to rank on Google. However, it is strongly recommended. Without a robots.txt file, search engine crawlers will attempt to access every page on your site, including admin panels, login pages, duplicate content, internal search result pages, and other pages you almost certainly do not want indexed. This wastes crawl budget and can dilute your SEO authority across pages that add no value.

For small websites with under 100 pages, the impact is minimal. For larger sites — especially e-commerce stores, news sites, or content-heavy platforms — a well-configured robots.txt file can make a meaningful difference in how efficiently search engines index your important content.

Crawl budget optimization with robots.txt

Crawl budget refers to the number of pages Googlebot will crawl on your website within a given time period. Google allocates crawl budget based on your site's authority and server performance. If your site has thousands of pages, Google may not crawl all of them — which means some of your best content might not get indexed.

By using robots.txt to block low-value pages — such as filtered product pages, session ID URLs, print versions of pages, or auto-generated tag pages — you free up crawl budget for the pages that actually need to be indexed. This is one of the most effective technical SEO strategies for large websites.

Pages worth blocking to conserve crawl budget:

/wp-admin/and other CMS admin directories- Faceted navigation URLs (e.g.,

/products?color=red&size=small) - Internal search result pages (e.g.,

/search?q=...) - Login and account pages

- Duplicate or thin content pages

- Staging or development environments

How search engine crawlers read robots.txt

When Googlebot, Bingbot, or any other crawler visits your site, it requests your robots.txt file first. It reads the file top to bottom and applies the rules that match its own user-agent name. If no specific rule matches the crawler, it falls back to the wildcard rule (User-agent: *).

Important: Google caches robots.txt files and refreshes them periodically — usually every 24 hours. If you make changes to your robots.txt file, it may take a day or two for Google to pick up the update. You can accelerate this using Google Search Console's URL Inspection tool.

Robots.txt Syntax Explained

Robots.txt uses a simple set of directives. Understanding each one is essential before you start editing or generating a file. A poorly written directive can block far more than you intended.

The robots exclusion protocol — the formal standard that defines how robots.txt works — specifies a handful of core directives that virtually all major crawlers support.

User-agent directive

The User-agent directive specifies which crawler the following rules apply to. Every rule block must start with a User-agent line.

User-agent: Googlebot

Use * (wildcard) to apply rules to all crawlers:

User-agent: *

You can have multiple User-agent blocks in one file — each followed by its own Allow and Disallow rules.

Allow and Disallow directives

Disallow tells a crawler which paths it must not access. Allow explicitly permits a path, even if a parent directory is disallowed.

User-agent: *

Disallow: /wp-admin/

Disallow: /private/

Allow: /wp-admin/admin-ajax.php

In this example, all crawlers are blocked from /wp-admin/ except for the admin-ajax.php file, which is needed for some WordPress front-end features to work correctly.

Rules to remember:

- A blank

Disallow:value means nothing is blocked — allow all Disallow: /means block everything — the entire site- Paths are case-sensitive:

/Blog/and/blog/are treated as different paths - Rules apply to paths, not individual files by default

Crawl-delay directive

The Crawl-delay directive tells crawlers how many seconds to wait between requests. This is useful if bot traffic is putting strain on your server.

User-agent: *

Crawl-delay: 10

Note: Google officially does not support Crawl-delay. To control Googlebot's crawl rate, use the crawl rate settings inside Google Search Console instead.

Sitemap directive

The Sitemap directive tells crawlers where to find your XML sitemap. Place it at the end of your robots.txt file:

Sitemap: https://yourwebsite.com/sitemap.xml

You can list multiple sitemaps if you have more than one:

Sitemap: https://yourwebsite.com/sitemap.xml

Sitemap: https://yourwebsite.com/news-sitemap.xml

How to add sitemap to robots.txt: simply add the full absolute URL of your sitemap as its own line, using the Sitemap: prefix. This is one of the easiest and most impactful things you can do to help search engines discover your content.

5 Copy-Paste Robots.txt Templates

Below are five ready-to-use robots.txt examples covering the most common scenarios. Copy the one that fits your situation and customize as needed.

Template 1: Allow all crawlers (default open access)

# Allow all crawlers to access everything

User-agent: *

Disallow:

Sitemap: https://yourwebsite.com/sitemap.xml

Use this when you want full crawl access with no restrictions. The blank Disallow: line tells crawlers everything is permitted.

Template 2: Block all crawlers (full site lockdown)

# Block all crawlers from accessing the entire site

User-agent: *

Disallow: /

Use this for staging sites, development environments, or any site you do not want indexed under any circumstances. Do not use this on a live production site unless you intentionally want to disappear from search results.

Template 3: Standard WordPress robots.txt example

# WordPress standard robots.txt

User-agent: *

Disallow: /wp-admin/

Allow: /wp-admin/admin-ajax.php

Disallow: /wp-content/plugins/

Disallow: /?s=

Disallow: /search

Sitemap: https://yourwebsite.com/sitemap_index.xml

This is a solid starting point for most WordPress sites. It blocks admin areas and internal search pages while allowing everything else.

Template 4: Shopify robots.txt example

Shopify automatically generates a robots.txt file for all stores. As of 2023, Shopify allows merchants to customize it using the robots.txt.liquid template. A standard Shopify file looks like this:

User-agent: *

Disallow: /a/downloads/-/*

Disallow: /admin

Disallow: /cart

Disallow: /orders

Disallow: /checkouts/

Disallow: /checkout

Disallow: /53d34b03-94a4-406a-8494-2ad9e4cd4ba0

Disallow: /carts

Disallow: /account

Disallow: /collections/*sort_by*

Disallow: /*/collections/*sort_by*

Disallow: /collections/*+*

Disallow: /collections/*%2B*

Disallow: /collections/*%2b*

Disallow: /*/collections/*+*

Disallow: /*/collections/*%2B*

Disallow: /*/collections/*%2b*

Disallow: /blogs/*+*

Disallow: /blogs/*%2B*

Disallow: /blogs/*%2b*

Disallow: /*/blogs/*+*

Sitemap: https://yourstore.myshopify.com/sitemap.xml

Template 5: Block all AI training bots (2026 standard)

# Block all major AI training crawlers

User-agent: GPTBot

Disallow: /

User-agent: ClaudeBot

Disallow: /

User-agent: CCBot

Disallow: /

User-agent: PerplexityBot

Disallow: /

User-agent: Google-Extended

Disallow: /

User-agent: Applebot-Extended

Disallow: /

User-agent: Diffbot

Disallow: /

User-agent: Omgilibot

Disallow: /

# Allow normal search engine crawlers

User-agent: *

Disallow:

Sitemap: https://yourwebsite.com/sitemap.xml

This template blocks all major AI training crawlers while keeping your site fully accessible to Google, Bing, and other traditional search engines.

How to Block AI Crawlers in Robots.txt (GPTBot, ClaudeBot, PerplexityBot)

One of the biggest developments in the robots.txt space in 2025 and 2026 has been the rise of AI crawler blocking. As large language models like ChatGPT, Claude, and Perplexity built massive training datasets by crawling the web, many publishers began asking: do I want my content used to train these systems?

The answer depends on your goals. But the good news is that you have full control — and the major AI companies have published official user-agent strings that you can use to block their crawlers via robots.txt.

Complete AI crawler user-agent table (2026)

| Bot Name | Company | User-agent string | Blocks training? |

|---|---|---|---|

| GPTBot | OpenAI | GPTBot |

Yes — OpenAI training data |

| ClaudeBot | Anthropic | ClaudeBot |

Yes — Anthropic training data |

| Google-Extended | Google-Extended |

Yes — Gemini AI training | |

| PerplexityBot | Perplexity AI | PerplexityBot |

Yes — Perplexity AI crawling |

| Applebot-Extended | Apple | Applebot-Extended |

Yes — Apple AI training |

| CCBot | Common Crawl | CCBot |

Yes — open crawl dataset used by many AI models |

| Diffbot | Diffbot | Diffbot |

Yes — AI data extraction |

| omgili / Omgilibot | Webhose | omgili, Omgilibot |

Yes — media monitoring / AI training |

| FacebookExternalHit | Meta | FacebookExternalHit |

Used for link previews (not AI training specifically) |

| Bytespider | ByteDance | Bytespider |

Yes — TikTok/ByteDance AI crawling |

Should you block AI crawlers? (decision guide)

Block AI crawlers if:

- You are a publisher, news site, or content creator who relies on your content's uniqueness as a business asset

- You do not want your writing style, images, or research used to train commercial AI products without compensation

- You are concerned about AI-generated summaries replacing clicks to your actual site

Allow AI crawlers if:

- You want your content to appear in AI-generated answers (this can drive brand awareness)

- You run a documentation site or knowledge base that benefits from broad exposure

- You are not primarily competing on content uniqueness

A middle-ground approach: Block training-specific bots (GPTBot, ClaudeBot, CCBot, Google-Extended) but allow search-focused bots (PerplexityBot if they send traffic, Applebot for Apple Search). Each company publishes its bot's behavior — check their documentation for current policies.

Copy-paste: block all AI training bots

Use Template 5 from Section 4 above. Or combine blocking with selective allowing:

# Block OpenAI training but allow ChatGPT browsing

User-agent: GPTBot

Disallow: /

# Block Anthropic training crawler

User-agent: ClaudeBot

Disallow: /

# Block Google's AI training (does not affect normal Google Search)

User-agent: Google-Extended

Disallow: /

# Allow all standard search engine bots

User-agent: *

Disallow:

Important: blocking Google-Extended does NOT affect how Googlebot crawls your site or how you rank in Google Search. They are separate crawlers with separate purposes.

Ready to build your custom robots.txt with AI bot controls? The AllFileTools Robots.txt Generator lets you select which bots to block with a single click — no coding required.

Robots.txt vs Noindex — Key Differences Explained

One of the most common points of confusion in technical SEO is the difference between robots.txt and the noindex meta tag. Both are used to control what search engines do with your pages — but they work in completely different ways and should never be confused.

The core difference: robots.txt controls whether a crawler can visit a page. Noindex controls whether a page can be shown in search results.

When to use robots.txt vs noindex

| Factor | Robots.txt | Noindex meta tag |

|---|---|---|

| What it controls | Whether the crawler can access the page | Whether the page appears in search results |

| How it works | Crawler reads the file before visiting any page | Crawler must visit the page to read the tag |

| Effect on indexing | Does NOT guarantee page won't be indexed | Directly instructs Google not to index the page |

| Effect on crawling | Stops the crawler from visiting the page | Crawler still visits, reads, and processes the page |

| Can Google still index a blocked page? | Yes — if another site links to it | No — noindex is a hard instruction |

| Best for | Admin areas, private directories, large sections of thin content | Individual pages you don't want in search results |

| What NOT to use it for | Hiding sensitive data (it's public!) | Stopping crawlers from consuming crawl budget |

| Syntax example | Disallow: /page-url/ |

<meta name="robots" content="noindex"> |

The critical mistake to avoid

Never use robots.txt to try to deindex a page that already has backlinks. If Google cannot crawl a page, it cannot see your noindex tag either. This creates a situation where Google knows the page exists (from links) but cannot verify you want it removed. The page can still appear as a URL-only result in search.

If you want a page completely removed from Google:

- Keep the page accessible (do not block in robots.txt)

- Add

<meta name="robots" content="noindex, nofollow">to the page - Submit a removal request via Google Search Console if you need it gone urgently

How to Generate a Robots.txt File for Free

Creating a robots.txt file by hand is possible, but time-consuming and error-prone. A single typo in a path or directive can accidentally block crawlers from your most important pages. The faster, safer approach is to use a free online robots.txt generator — no signup, no download, no coding required.

The AllFileTools Robots.txt Generator is a fully free, no-account-required tool that lets you build a custom robots.txt file in under two minutes. Here is how to use it step by step.

Step 1: Set your default crawler behavior

Open the robots.txt generator. The first setting controls how the tool applies to all bots using the wildcard User-agent: *. Choose whether you want to allow or disallow all crawlers by default. For most websites, allow all as the default and then restrict specific bots or directories.

Step 2: Configure specific bots and paths

Add the specific crawlers you want to control. The tool supports all major search engines (Googlebot, Bingbot, Yandexbot, DuckDuckBot) as well as AI training crawlers including GPTBot, ClaudeBot, PerplexityBot, and Google-Extended.

For each bot, you can set Allow or Disallow paths. Common paths to disallow:

/wp-admin/for WordPress admin/cart/and/checkout/for e-commerce/searchfor internal search result pages/private/or any directory holding sensitive files

Step 3: Add your sitemap URL and download

Enter your sitemap URL in the Sitemap field (for example, https://yourwebsite.com/sitemap.xml). The tool adds it automatically at the end of the file in the correct format.

Click generate and your robots.txt file is ready. Copy the output and paste it directly into your website root, or download it as a .txt file. No signup. No email required. No limits.

Validate your file with a robots.txt checker tool

After uploading your file, always verify it is working correctly. You can use the robots.txt checker in Google Search Console (go to Settings → Crawl stats → Open report → robots.txt) to test specific URLs against your file and confirm whether Googlebot would be allowed or blocked.

The AllFileTools Robots.txt Generator also shows you a live preview of the output as you configure settings, so you can spot any issues before downloading.

How to Add Robots.txt to Your Website

Once you have generated your robots.txt file, you need to upload it to the correct location on your server. The exact steps depend on which platform or CMS you are using. Here is how to add robots.txt on the most popular platforms.

How to add robots.txt on WordPress (using Yoast SEO)

WordPress is the easiest platform for managing robots.txt, especially with the Yoast SEO plugin installed:

- In your WordPress dashboard, go to SEO → Tools

- Click File Editor

- You will see your current robots.txt content in the editor (or a blank file if none exists)

- Paste your generated robots.txt content and click Save Changes to Robots.txt

If you are not using Yoast, you can edit the file directly via FTP:

- Connect to your server using an FTP client like FileZilla

- Navigate to your website's root directory (the same folder that contains

/wp-content/) - Upload your

robots.txtfile here — if one already exists, replace it - Verify by visiting

yourdomain.com/robots.txtin your browser

How to find robots.txt file in WordPress: If you are using Yoast, the file is managed virtually and edited through the plugin. Without Yoast, look for it in your WordPress root directory via FTP or your hosting file manager.

How to access robots.txt in WordPress: Navigate directly in your browser to yoursite.com/robots.txt to view what is currently live. To edit it, use Yoast SEO → Tools → File Editor.

How to add robots.txt on Shopify

Shopify automatically generates a robots.txt file for every store. As of 2023, you can customize it using the robots.txt.liquid template:

- From your Shopify admin, go to Online Store → Themes

- Click Actions → Edit Code on your active theme

- Under the Templates section, click Add a new template

- Select

robots.txtfrom the dropdown and click Create template - Edit the

robots.txt.liquidfile to customize your rules - Click Save

If you only want to add extra disallow rules without fully customizing the template, you can use the {% for rule in robots.default_groups %} approach to extend the default file. Shopify's documentation covers this in detail.

How to edit robots.txt in Shopify: Go to Online Store → Themes → Actions → Edit Code → Templates → robots.txt.liquid.

How to update robots.txt in Shopify: Edit the robots.txt.liquid template and save. Changes take effect immediately.

How to add robots.txt on Wix and Webflow

Wix: Wix automatically manages robots.txt for all sites. You cannot directly edit the file, but you can connect your site to Google Search Console and manage crawling preferences from there. Wix does respect standard robots.txt rules for bots that check it.

Webflow: In Webflow, go to Project Settings → SEO and paste your robots.txt content directly into the Robots.txt field. Webflow will serve it automatically at yourdomain.com/robots.txt.

How to add robots.txt in HTML (static sites): Simply place your robots.txt file in the root directory of your web server. If your site is hosted on cPanel, log into File Manager, navigate to public_html, and upload the file directly. Access it via FTP or your hosting control panel.

7 Common Robots.txt Mistakes to Avoid

These are the seven robots.txt mistakes that developers and site owners make most often — and some of them are catastrophic for SEO.

Mistake 1: Accidentally blocking your entire site

The most dangerous mistake in robots.txt history:

# DO NOT USE THIS ON A LIVE SITE

User-agent: *

Disallow: /

This single line blocks all crawlers from accessing every page on your website. Google will stop indexing your content. Your rankings will drop. This is surprisingly common — it often happens when developers copy a robots.txt file from a staging environment and forget to update it before launch.

How to fix it: Check your robots.txt immediately after any site migration, redesign, or CMS change. Visit yoursite.com/robots.txt and verify the Disallow line does not contain just a forward slash.

Mistake 2: Using noindex inside robots.txt (it does not work)

Robots.txt does not support meta tags or HTML directives. This line does absolutely nothing:

# THIS DOES NOT WORK

User-agent: *

Noindex: /page/

The noindex directive must be placed inside the <head> of the actual HTML page as a meta tag, or delivered via the X-Robots-Tag HTTP header. Writing noindex in robots.txt is silently ignored by all major crawlers.

Mistake 3: Blocking CSS and JavaScript files

Google needs to access your CSS and JS files to render your pages and understand their content. If you block them, Google sees a broken version of your site — similar to what it looked like in the early 2000s — and cannot properly evaluate your content or user experience.

Do not add:

# This hurts your SEO

Disallow: /wp-content/themes/

Disallow: /wp-content/plugins/

Unless you have a very specific reason, leave CSS and JS files accessible to crawlers.

Mistake 4: Case-sensitivity errors

Robots.txt paths are case-sensitive. /Blog/ and /blog/ are treated as completely different paths. If your blog lives at /blog/ but you write Disallow: /Blog/, the rule will not apply and your blog will still be crawled.

Always check the exact casing of your URLs before adding them to robots.txt.

Mistake 5: Missing the trailing slash on directory paths

There is a significant difference between these two rules:

Disallow: /private # Blocks /private but not /private/

Disallow: /private/ # Blocks /private/ and everything inside it

When blocking a directory, always include the trailing slash. Without it, you might only block the index page and miss all the subdirectories and files inside it.

Mistake 6: Relying on robots.txt for security

Robots.txt is a public file. Any person — not just crawlers — can visit yourwebsite.com/robots.txt and see every directory you have listed. If you disallow /admin-secret-backup/, you have just advertised the existence of that directory to anyone who looks.

Never use robots.txt to "hide" sensitive areas. Use proper authentication, .htaccess password protection, or IP restrictions for actual security. Robots.txt is a courtesy instruction to well-behaved bots, not a security mechanism.

Mistake 7: Forgetting to update robots.txt after a site redesign

When you relaunch a site, restructure your URLs, or migrate to a new platform, your existing robots.txt rules may no longer match your current URL structure. Old Disallow rules might block pages that now have different paths, or fail to block pages that have moved to new locations.

After any significant site change, audit your robots.txt file from scratch. Use Google Search Console's URL testing tool to verify that your important pages are accessible and your disallowed pages are properly blocked.

Robots.txt FAQ

What is robots.txt used for?

Robots.txt is used to tell search engine crawlers which pages or directories on your website they are allowed to access. It helps manage crawl budget, prevent duplicate or sensitive content from being crawled, and point bots to your XML sitemap. It does not directly control what appears in search results — that is the job of the noindex meta tag.

What is robots.txt in SEO?

In SEO, robots.txt is a technical tool used to control how search engine crawlers interact with your website. It is part of technical SEO strategy and directly affects crawl budget management, duplicate content prevention, and the efficiency with which search engines discover your important pages. A well-configured robots.txt file supports your overall SEO by ensuring crawlers focus their attention on content that matters.

What is a robots.txt file?

A robots.txt file is a plain text document placed at the root of your website (at yoursite.com/robots.txt) that follows the Robots Exclusion Protocol. It contains a series of rules — called directives — that tell web crawlers which areas of your site to crawl and which to skip.

Is robots.txt necessary for SEO?

Robots.txt is not strictly required — but it is strongly recommended for any site that cares about SEO. Without it, crawlers will attempt to access every URL on your site, including admin pages, duplicate content, and search result pages, which wastes your crawl budget. For large or complex sites, robots.txt is essential for efficient indexing.

What does robots.txt disallow do?

The Disallow directive in robots.txt tells a crawler not to access a specific path or directory. For example, Disallow: /private/ tells all bots to skip everything under the /private/ folder. Crawlers that follow the Robots Exclusion Protocol (including Google, Bing, and most major search engines) will respect this instruction and not visit those URLs.

Can robots.txt block AI crawlers?

Yes. All major AI companies — including OpenAI, Anthropic, Google, Perplexity, and Apple — have published official user-agent strings for their training crawlers. You can block them using their specific user-agent names: GPTBot (OpenAI), ClaudeBot (Anthropic), Google-Extended (Google Gemini training), PerplexityBot, and Applebot-Extended. Blocking these crawlers prevents your content from being used to train AI models.

What is the difference between robots.txt and noindex?

Robots.txt controls whether a crawler can visit a page. The noindex meta tag controls whether a page can appear in search results. The key distinction: if you block a page in robots.txt, Google cannot read the noindex tag on that page — meaning a blocked page can still show up as a URL in search results if other sites link to it. Use robots.txt to control crawling, and noindex to control indexing.

Where should I put my robots.txt file?

Robots.txt must be placed at the root domain level, accessible at https://yourdomain.com/robots.txt. It cannot be in a subdirectory. If you have multiple subdomains, each one needs its own robots.txt file if you want to set separate crawling rules for each.

Do I need a robots.txt file for a small website?

For very small sites (under 20–30 pages), robots.txt has minimal impact on SEO. However, it is still good practice to have one — at minimum to block admin directories and include your sitemap URL. It takes less than two minutes to generate one using the free AllFileTools Robots.txt Generator.

How do I generate a robots.txt file for free?

Use the AllFileTools Robots.txt Generator — completely free, no signup required. Select your crawler preferences, add your disallow paths, include your sitemap URL, and copy the generated file. The entire process takes under two minutes.

How do I check if a website has a robots.txt file?

Simply visit https://domain.com/robots.txt in your browser. If a robots.txt file exists, you will see it as plain text. If the site returns a 404 error, no robots.txt file is present. You can also use Google Search Console's robots.txt tester to check the file for your own website.

How to check robots.txt is working or not?

In Google Search Console, go to Settings → Crawl stats → Open report → robots.txt. This shows you the current cached version of your robots.txt and lets you test individual URLs to see whether they would be allowed or blocked. You can also use third-party tools like robots.txt validators to test your file.

How to fix "indexed though blocked by robots.txt"?

This Google Search Console warning means Google found and indexed a page that you are blocking in robots.txt. The fix depends on your goal:

- If you DO want the page indexed: Remove the Disallow rule from robots.txt and resubmit the URL in Search Console.

- If you do NOT want the page indexed: Remove the Disallow rule from robots.txt so Google can access the page, then add a

<meta name="robots" content="noindex">tag to the page itself. Once Google recrawls and sees the noindex tag, it will remove the page from its index.

Never block a page in robots.txt and try to noindex it at the same time — the two instructions conflict with each other.

How to remove robots.txt file from website?

To remove a robots.txt file, delete it from your server root directory via FTP, your hosting file manager, or your CMS (for example, Yoast SEO's File Editor in WordPress). If you want to keep the file but allow all crawlers, replace the content with:

User-agent: *

Disallow:

This effectively removes all restrictions while keeping the file in place.

How to disable robots.txt in WordPress?

In WordPress with Yoast SEO installed, go to SEO → Tools → File Editor and clear all Disallow rules, or replace the content with an open allow-all file. You can also disable Yoast's robots.txt management entirely in Yoast settings if you prefer to manage the file manually via FTP.

How to add sitemap to robots.txt?

At the end of your robots.txt file, add a line beginning with Sitemap: followed by the full URL of your sitemap:

Sitemap: https://yourwebsite.com/sitemap.xml

You can add multiple Sitemap lines if you have more than one sitemap file. This is one of the easiest ways to help search engines discover your content.

What is custom robots.txt in Blogger?

In Blogger (Google's blogging platform), the custom robots.txt setting allows you to replace the default robots.txt file with your own custom content. Go to Settings → Crawlers and indexing → Custom robots.txt, enable it, and paste your custom robots.txt content. By default, Blogger generates a basic robots.txt automatically.

How to submit robots.txt to Google?

You do not need to manually submit robots.txt to Google. Google's crawler automatically checks your robots.txt file whenever it crawls your site. However, you can speed up the process of Google recognizing changes using Google Search Console: go to URL Inspection, enter your domain, and request a reindex. You can also use the robots.txt tester in Google Search Console to confirm Google is reading the correct version of your file.

Why is robots.txt important?

Robots.txt is important because it gives you direct control over how search engine crawlers behave on your website. Without it, crawlers make their own decisions about which pages to visit, potentially wasting crawl budget on low-value pages, accessing admin areas, or crawling duplicate content. A well-configured robots.txt file is a foundational part of any technical SEO strategy.

How does robots.txt work?

When a crawler like Googlebot arrives at your website, the very first thing it does is request yourdomain.com/robots.txt. It reads the file, identifies the rules that apply to its user-agent, and then crawls your site according to those rules. Crawlers that follow the Robots Exclusion Protocol — which includes all major search engines and most legitimate bots — will respect the file. Malicious bots and scrapers typically ignore robots.txt entirely, which is why it should never be used as a security measure.

How to view robots.txt of a website?

To view any website's robots.txt file, add /robots.txt to the end of the root domain in your browser. For example: https://google.com/robots.txt or https://amazon.com/robots.txt. These are public files — any website can read another website's robots.txt.

How to write a robots.txt file?

A robots.txt file is written in plain text. Each rule block starts with User-agent:, followed by Allow: or Disallow: directives. End with your Sitemap: URL. Follow the Robots Exclusion Protocol syntax exactly — no HTML, no quotes around values, and one directive per line. Alternatively, use the free AllFileTools Robots.txt Generator to generate a correctly formatted file without writing any code.

Final Thoughts

A properly configured robots.txt file is one of the simplest yet highest-impact technical SEO improvements you can make to any website. Whether you are managing a blog, an e-commerce store, or a large content platform, getting your robots.txt right ensures search engines spend their time on your best content — not your admin panels, checkout pages, or duplicate URLs.

For 2026, the single most important new addition to any robots.txt file is your AI crawler strategy. Decide whether you want to block training bots like GPTBot and ClaudeBot, add the appropriate rules, and take control of how your content is used by AI systems.

And if you want to skip the manual work entirely — generate your robots.txt file free at AllFileTools. It takes under two minutes, requires no account, and gives you a correctly formatted, fully customizable file ready to upload.

Leave a Comment

No comments yet. Be the first to comment!